hallucination in the LLM-based Kagi Translate

You don’t have to spend long on my blog to figure out that I default to being grumpy about generative AI, but if I’ve made one exception to that rule, it’s for Kagi Translate. I’ve found it to be a genuinely helpful machine translation tool, with some neat features that I haven’t found in its Google or DeepL equivalents.

It took me aback a little tonight, then, when Kagi Translate straight up hallucinated something on me, in a way that I imagine wouldn’t be out of place for a more mainstream LLM (which I’ve never really used). Earlier today, while working on a paper for an upcoming conference, I was consulting a Jacques Ellul book I was about to cite, and I wanted to make sure that “genetic engineering” would be an accurate translation of his phrase « intervention génétique ». It could obviously also be rendered “genetic intervention,” but I’ve never heard that phrase in my life, so I’d prefer to go with a better-known phrase if it’s accurate.

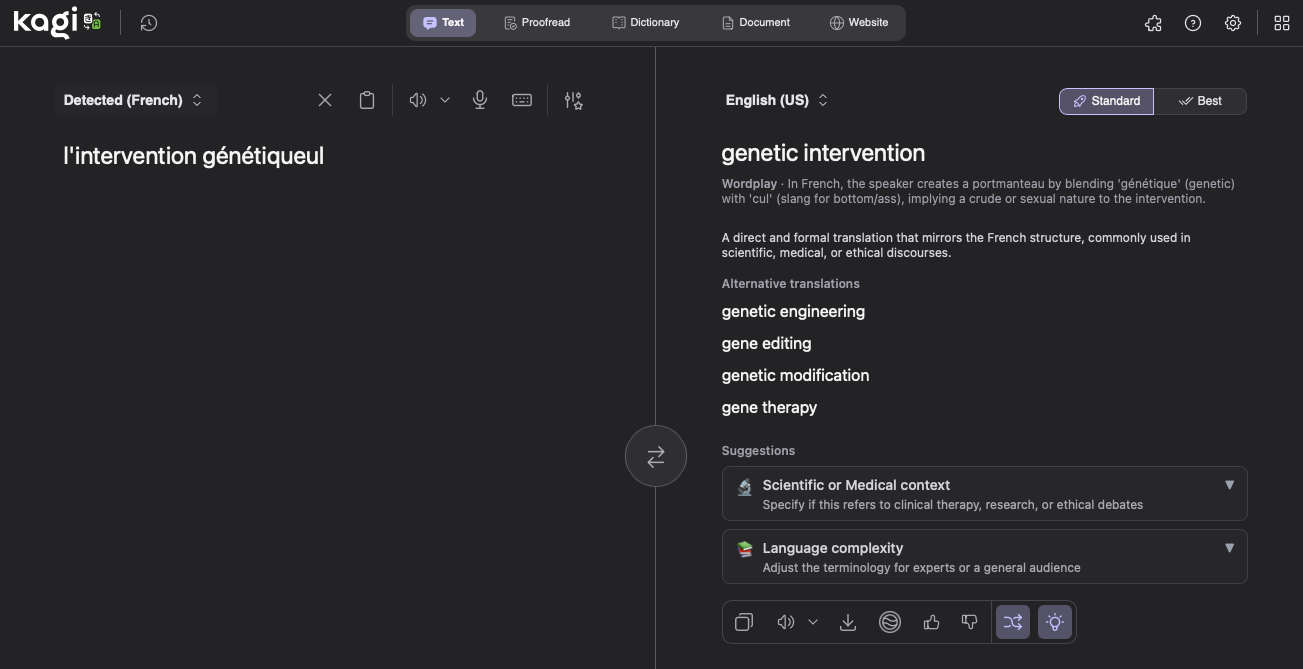

Anyway, “genetic engineering” was the first thing that Kagi Translate offered (along with a handful of other suggestions), so I felt vindicated in writing that in my paper and moving on. I left the tab open in my browser and went on to other things, forgetting about the exchange. Tonight, however, when I sat back down at my computer, I had one of those complex fat-finger experiences: I started typing something into one interface (my application launcher) but somehow closed it partway through, so the rest of what I was typing (the letters “ul”) was appended to whatever was frontmost on the system, which happened to be Kagi Translate. This led to the scene below, where I appear to have asked Kagi for the English translation of the (nonexistent) French phrase « l’intervention génétiqueul ».

It is perhaps to Kagi’s credit that the tool figured out the underlying query and still gave some valid translations for the real French phrase at the root of my frankenphrase, but what it read into the translation is not at all to the tool’s credit. Here’s some “helpful” context that Kagi provides for the phrase “l’intervention génétiqueul”:

> Wordplay - In French, the speaker creates a portmanteau by blending ‘génétique’ (genetic) with ‘cul’ (slang for bottom/ass), implying a crude or sexual nature to the intervention.

Not only is this very clearly not what I’m doing, but if I remember what I learned in my French phonetics course in college (though I’m happy to be corrected here), this isn’t even good wordplay on « cul ». Rather, « queul » ought to rhyme with (or rather be in assonance with) « queue », which could lend itself to other ribald wordplay (when the latter word is used as slang for “penis”), but which is not pronounced the same way as « cul », as I once learned the hard way (when trying to use « queue » in its more standard sense of “line,” as in “standing in line”).

In fact, as I keep wrestling with this fake word (which is ridiculous; again, it’s the result of fat-fingering something), the best wordplay I can think of is that some Francophone person started using « génétiqueul » as an equivalent of the English word “genetical” (which is so uncommon that I wasn’t sure it was actually a word until I just looked it up).

As I alluded to in the quick post I wrote after first discovering Kagi Translate, it’s primarily the question of digital labor that bothers me about generative AI, so I could potentially forgive one hallucinated (and, admittedly, amusing) “explanation” of nonexistent wordplay. However, seeing such a brazen error has forced me to confront once again the fact that a tool I like is built on technologies I am very much wary of. What’s more, a single error is one thing, but generative AI is being deployed at an enormous scale. To riff on a recent Ars Technica article, 90% accuracy might sound good until you realize that the scale of Google’s AI Overviews means “hundreds of thousands of lies going out every minute of the day.”

This is more thought than I meant to give this episode (if the screenshot weren’t so unwieldy, I’d have done a 200ish-character photoblog post with it instead, since I went through all the effort to make that a thing), but I did want to share it, if only to force myself to think through all of this some more.

