Below are posts associated with the “generative AI” tag.

🔗 linkblog: Reddit Issuing 'Formal Legal Demands' Against Researchers Who Conducted Secret AI Experiment on Users

WAIT. They prompt engineered the AI tool to disregard informed consent and ethical concerns?

🔗 linkblog: Duolingo will replace contract workers with AI

I have already been skeptical about Duolingo (as a company—the app is mostly not bad) for a while, but this is the sort of thing that makes me want to find an alternative for kiddo to use fast.

🔗 linkblog: Who Ordered That? On AI, Education, and the Illusion of Necessity | Punya Mishra's Web

I would be more critical of generative AI than Punya, but this is a solid, important argument.

🔗 linkblog: The Man Who Wants AI to Help You ‘Cheat on Everything’

Everything in this article makes me sad.

DuckDuckGo and IP geolocation (with a MapQuest and generative AI tangent)

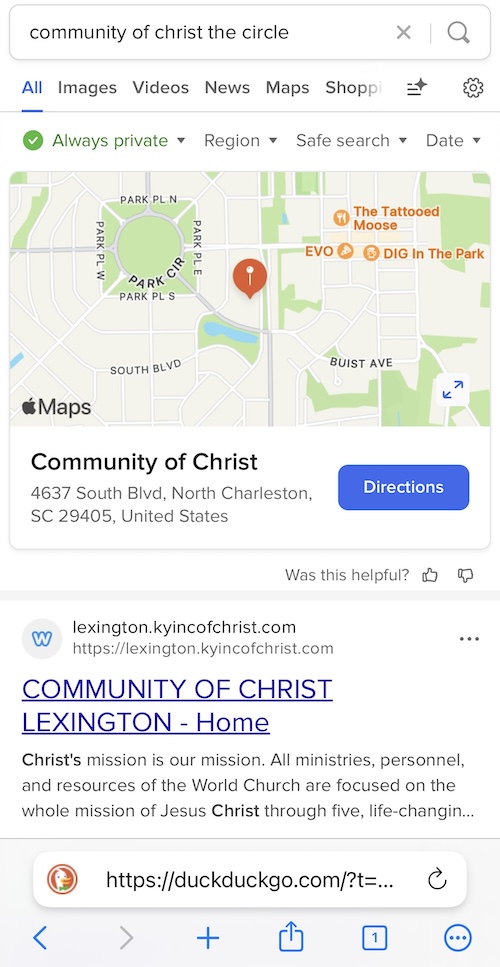

I don’t know if this is a DuckDuckGo thing or an underlying Bing thing, but I’ve started noticing something weird happening when I search for things that don’t get a lot of results. When it happened again earlier this week, I finally grabbed a screenshot:

So, here I am searching for something related to the (relatively obscure, relatively progressive) religious denomination I belong to, and when DDG (or maybe Bing) couldn’t find anything related to the specific thing I was searching for, the first text result that it gave me was the best result it could find for a subset of my search matched with the town I live in: Lexington, Kentucky.

🔗 linkblog: They’re putting A1 in the classrooms.

This video has been on my mind all morning, and it makes me so sad.

moral surrender, the environment, and generative AI

Last week, I blogged about how the purported inevitability of generative AI gets used to sidestep moral concerns about it. Earlier this morning, I shared a link to a story from The Verge that illustrates that perfectly, and so I wanted to write just a little bit more about it.

First, let’s quote some more from Jacques Ellul, whom I referenced in the last post (and whom I’ve just been referencing a lot in general recently). Right before the passage I quoted in last week’s post, which comes from his 1980 essay The Power of Technique and the Ethics of Non-Power, he had this to say:

🔗 linkblog: Take It Down Act nears passage; critics warn Trump could use it against enemies

NCII enrages me, but I’m skeptical of this as a solution.

🔗 linkblog: OpenAI helps spammers plaster 80,000 sites with messages that bypassed filters

Sure this looks bad, but heaven forbid the U.S. lose AI leadership or whatever.

🔗 linkblog: Trump says the future of AI is powered by coal

This sort of thing reminds me why I’m so entrenched in my skepticism of generative AI. There’s an uncritical insistence that the world needs AI, that America should be first in AI, and that we’re just going to have to increase energy production instead of asking ourselves if that’s worth the cost. Credit to Trump, I guess, for illustrating just how dangerous all these attitudes are.

two things that bug me about arguments that generative AI is inevitable or whatever

I don’t know that “inevitable” is the right word to use in the title of this post. What I’m trying to evoke is that specific argument about generative AI that now that it’s here, there’s no going back, so the only real/responsible/whatever choice is to learn to use it properly, teach others to use it, accept it as part of life, etc. These are the arguments that the world is forever changed and that there’s no going back—that the genie is out of the bottle so we might as well harness it.

🔗 linkblog: OpenAI and Anthropic are fighting over college students with free AI

I was already planning to voice skepticism about Apple partnerships with universities in a manuscript I’m writing, but now I’ve got this to cite as well.

🔗 linkblog: Trump’s new tariff math looks a lot like ChatGPT’s

Well, if he’s going to ruin the economy, at least he can come by his strategy in the dumbest possible way.

🔗 linkblog: Best printer 2025: just buy a Brother laser printer, the winner is clear, middle finger in the air

I didn’t need to read a printer recommendation article today, but I’m so glad I did. The rage about the world we live in is great.

🔗 linkblog: 'I Want to Make You Immortal:' How One Woman Confronted Her Deepfakes Harasser

Studio Ghibli pictures are neat (legitimately! it’s one of the first generative AI things that’s tempted me!), but these deepfakes are the price we pay for them, and I think that’s too high a price.

🔗 linkblog: How crawlers impact the operations of the Wikimedia projects

I think this is a good example of why digital labor is a particularly salient critique of generative AI. Yes, Wikimedia content is licensed, but not as strictly as copyrighted works. Yet ripping off their work is arguably worse than grabbing some copyrighted works.

🔗 linkblog: OpenAI's Studio Ghibli meme factory is an insult to art itself

I skipped over this article the first few times I saw it, but I think there’s some good stuff in here. Is defying Ghibli the point?

🔗 linkblog: OpenAI's viral Studio Ghibli moment highlights AI copyright concerns | TechCrunch

Generative AI products make me mad, I don’t like them, and I’m not going to defend them. That said, if this gets framed as a copyright problem, is there any way to give Studio Ghibli (or Pixar or the Seuss estate) power to cry foul here that doesn’t also shut down fan art, parodies, and the like? I’m skeptical, and that’s why I think “labor” is the more productive—if more legally ambiguous—framing here.

thoughts on academic labor, digital labor, intellectual property, and generative AI

Thanks to this article from The Atlantic that I saw on Bluesky, I’ve been able to confirm something that I’ve long assumed to be the case: that my creative and scholarly work is being used to train generative AI tools. More specifically, I used the searchable database embedded in the article to search for myself and find that at least eight of my articles (plus two corrections) are available in the LibGen pirate library—which means that they were almost certainly used by Meta to train their Llama LLM.

policy and the prophetic voice: generative AI and deepfake nudes

This is a mess of a post blending thoughts on tech policy with religious ideas and lacking the kind of obvious throughline or structure that I’d like it to have. It’s also been in my head for a couple of weeks, and it’s time to release it into the world rather than wait for it to be something better. So, here it is:

I am frustrated with generative AI technology for many reasons, but one of the things at the top of that list is the knowledge that today’s kids are growing up in a world where it is possible—even likely—that their middle and high school experiences are going to involve someone using generative AI tools to produce deepfake nudes (or other non-consensual intimate imagery—NCII) of them. See, for example, this horrifying story from the New York Times last April.